Chunhui Liu

Staff ML Engineer · TikTok Video Search

I'm a Staff Machine Learning Engineer on TikTok's Video Search team, where I serve as tech lead for relevance and pretraining. I work with a talented group to build the world's largest short-video search engine, covering tens of billions of videos and serving billions of users daily. My team develops BERT and LLM models for the search engine, drawing on techniques from CV, NLP, multimodal learning, and pretraining.

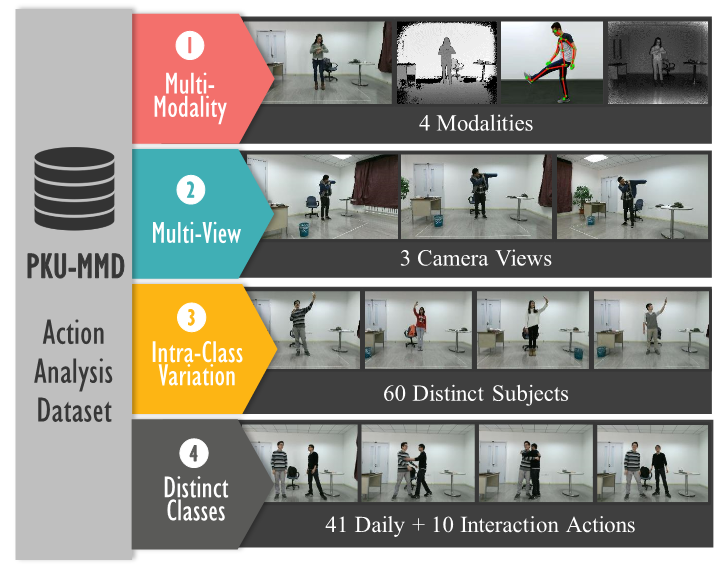

Previously, I was an Applied Scientist at Amazon AI, where I conducted research and developed real-world applications for video and action understanding. I hold a Master's degree in Computer Vision from CMU and a Bachelor's degree in Computer Science, summa cum laude, from Peking University, where I was supervised by Prof. Jiaying Liu.